Introduction to Electronic Calibration and Methods for Correcting Manufacturing Tolerances in Industrial Equipment Designs

Abstract

This tutorial discusses how the proper design of trim, adjustment, and calibration circuits can correct system tolerances, making industrial equipment safer, more accurate, and more affordable. Calibration subjects addressed include compensating for component tolerances, using final-test calibration, improving reliability through power-on self-test and continuous/periodic calibration, enabling accurate automated adjustments, replacing mechanical trims with all-electronic equivalents, and leveraging precision voltage references for digital calibration.

Making Industrial Equipment Accurate, Safe, and Affordable with Electronic Calibration

We demand safety in our factories. Customers expect quality products, which require accurate manufacturing equipment. At the same time, equipment must be affordable. How can manufacturers deliver "perfect" equipment at a reasonable price? In a word, calibration. Electronic calibration enables the remote calibration and testing of field devices such as sensors, valves, and actuators. Because field devices and programmable logic controllers (PLCs) are size constrained, they benefit from the small size of electronic calibration devices.

All practical components, both mechanical and electronic, have manufacturing tolerances. The more relaxed the tolerance, the more affordable the component. When components are assembled into a system, the individual tolerances sum to create a total system error tolerance. Through the proper design of trim, adjustment, and calibration circuits, it is possible to correct these system errors, thereby making equipment safe, accurate, and affordable.

Calibration can reduce cost in many areas. It can be used to remove manufacturing tolerances, specify less-expensive components, reduce test time, improve reliability, increase customer satisfaction, reduce customer returns, lower warranty costs, and speed product delivery.

Digitally controlled calibration devices and potentiometers (pots) are replacing mechanical pots in many factory settings. This digital approach results in better reliability and improved employee safety. This increased dependability can reduce product liability concerns. Another advantage is reduced test time and expense by removing human error. Automatic test equipment (ATE) can perform the test functions quickly and precisely, time after time. In addition, digital devices are insensitive to dust, dirt, and moisture, which can cause failure in mechanical pots.

Testing and calibration fall into three broad areas: production-line final testing, periodic self-testing, and continuous monitoring and readjustment. Practical products may use some or all of the above test methods.

Compensating for Component Tolerances Using Final-Test Calibration

Final-test calibration corrects for errors caused by the combined tolerances of many components. One or more adjustments may be required to calibrate the device under test (DUT) to meet a manufacturer's specifications.

As a simple example, assume that the equipment uses resistors with 5% tolerance in several circuits. During design, we simulate the circuits and run a Monte Carlo analysis. That is, we randomly vary the resistor values within their tolerance limits to explore the effect on the output signal. The simulation produces a family of curves that shows the worst-case errors caused by the resistor tolerances. With this knowledge, the designer decides to use the circuits as-is and simply adjust the offset and span (gain) during final test to meet system specifications. So, we make measurements in the final production test and have a human set the span and offset using two mechanical pots. Calibration is complete, but have we solved the problem, masked the problem, or added a bigger unknown?
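The Monte Carlo step can be illustrated with a minimal sketch. It assumes a simple two-resistor divider and a uniform distribution of resistor values within the tolerance band. Both are illustrative assumptions, as are the function names and the 10k nominal values; the article does not specify the circuits being simulated.

```python
import random

def divider_gain(r_top, r_bottom):
    """Gain of a resistive divider: Vout/Vin = R_bottom / (R_top + R_bottom)."""
    return r_bottom / (r_top + r_bottom)

def monte_carlo_gain(r_top_nom, r_bottom_nom, tol, trials, seed=0):
    """Return (min, max) divider gain over random resistor values within +/- tol."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same result
    gains = []
    for _ in range(trials):
        # Assumption: each resistor is uniformly distributed within its tolerance.
        r_top = r_top_nom * (1 + rng.uniform(-tol, tol))
        r_bottom = r_bottom_nom * (1 + rng.uniform(-tol, tol))
        gains.append(divider_gain(r_top, r_bottom))
    return min(gains), max(gains)

nominal = divider_gain(10_000, 10_000)
gain_min, gain_max = monte_carlo_gain(10_000, 10_000, tol=0.05, trials=10_000)
print(f"nominal gain {nominal:.4f}, simulated range {gain_min:.4f} to {gain_max:.4f}")
```

For this divider the analytic worst case is a gain of 0.475 to 0.525, so the simulated range should fall just inside those limits. That spread is what the span adjustment at final test must be able to absorb.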

Experienced production engineers know human error is a real issue. Unintentional slips can ruin the best of plans. Asking a human to perform a boring, repetitive task is asking for problems. A better way is to automate such a task. Electrically adjustable calibration devices enable quick automatic testing, which improves repeatability, reduces cost, and enhances safety by removing the human-error factor.
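An automated span and offset adjustment can be reduced to a two-point calculation. The sketch below assumes the DUT response is linear and that the test system applies two known reference inputs. The function names and the example readings are hypothetical.

```python
def two_point_calibration(ref_low, ref_high, meas_low, meas_high):
    """Return (gain, offset) such that corrected = gain * measured + offset."""
    if meas_high == meas_low:
        raise ValueError("the two measurements must differ")
    gain = (ref_high - ref_low) / (meas_high - meas_low)   # span correction
    offset = ref_low - gain * meas_low                      # offset correction
    return gain, offset

def apply_calibration(measured, gain, offset):
    """Correct a raw reading with the stored span and offset values."""
    return gain * measured + offset

# Hypothetical DUT readings for known 1.000 V and 4.000 V inputs.
gain, offset = two_point_calibration(1.000, 4.000, 1.080, 4.230)
print(f"gain {gain:.5f}, offset {offset:.5f} V")
print(f"corrected mid-scale reading: {apply_calibration(2.655, gain, offset):.4f} V")
```

In a real product the two results would be written to the calibration devices, or stored in nonvolatile memory, by the test system rather than set by hand.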

Improving Reliability and Long-Term Stability by Power-On Self-Test and Continuous/Periodic Calibration

Calibration during the final production test compensates for manufacturing tolerances, and the stored calibration data is applied each time the system powers up. Conditions in the field create a further need for test and calibration. Temperature, humidity, and component aging (drift) all produce signal span and offset errors. These factors are accounted for with a combination of self-test at power-up and periodic or continuous testing. Some circuits also carry control or average information that can be stored periodically. Field testing may be as simple as sensing temperature and compensating accordingly, or it may be more complex.

Many products include an internal microprocessor, which can aid testing. For example, a weight scale can compensate for the weight of the product package, such as a plastic bag or glass jar. Subtracting the weight of the package (tare weight) from the gross weight is necessary to accurately measure the net weight of the material on the scale. Because the weight of the package may change over time due to manufacturing variation or a change of vendors, it is desirable to update the tare or container weight from time to time.
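The tare correction amounts to one subtraction plus an occasional update of the stored container weight. The following sketch is illustrative only: the class name, the averaging of several empty-container readings, and the example weights are assumptions.

```python
class TareScale:
    """Scale that subtracts a stored container (tare) weight from gross readings."""

    def __init__(self):
        self.tare = 0.0

    def update_tare(self, empty_container_readings):
        """Store the average of several empty-container readings as the new tare."""
        if not empty_container_readings:
            raise ValueError("need at least one reading")
        self.tare = sum(empty_container_readings) / len(empty_container_readings)

    def net_weight(self, gross):
        """Net weight of the material = gross weight - tare weight."""
        return gross - self.tare

scale = TareScale()
scale.update_tare([12.1, 11.9, 12.0])  # grams, hypothetical jar readings
net = scale.net_weight(512.0)
print(f"net weight: {net:.1f} g")
```

Calling `update_tare` again after a change of packaging vendor refreshes the stored value without touching the rest of the measurement path.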

Another example is using a switch to short an amplifier input to ground to measure offset voltage. This could be done during power-on self-test to compensate for component aging. Alternatively, it can be performed periodically to compensate for temperature-induced drift. If the temperature drift is predictable and repeatable, a microprocessor can aid testing by measuring temperature and controlling the calibration device in an open-loop manner.
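Both techniques in this paragraph can be sketched against a simulated front end. `FakeFrontEnd`, its methods, the sample count, and the drift coefficient are hypothetical stand-ins for real hardware access. The open-loop function also assumes the drift is linear in temperature, which the article does not state.

```python
class FakeFrontEnd:
    """Simulated amplifier + ADC with a fixed offset, for illustration only."""

    def __init__(self, signal, offset):
        self.signal = signal
        self.offset = offset
        self.shorted = False

    def short_input(self, enable):
        self.shorted = enable  # models the switch that grounds the amplifier input

    def read_adc(self):
        return self.offset if self.shorted else self.signal + self.offset

def measure_offset(read_adc, short_input, samples=16):
    """Short the input to ground, average several readings, then restore the input."""
    short_input(True)
    try:
        readings = [read_adc() for _ in range(samples)]
    finally:
        short_input(False)  # always reconnect the signal path
    return sum(readings) / len(readings)

def open_loop_offset(offset_at_ref, temp_c, drift_per_c, ref_temp_c=25.0):
    """Predict offset from temperature, assuming linear, repeatable drift."""
    return offset_at_ref + drift_per_c * (temp_c - ref_temp_c)

fe = FakeFrontEnd(signal=1.250, offset=0.003)
offset = measure_offset(fe.read_adc, fe.short_input)
corrected = fe.read_adc() - offset
predicted = open_loop_offset(offset, temp_c=45.0, drift_per_c=0.00002)
print(f"offset {offset:.4f} V, corrected {corrected:.4f} V, predicted at 45C {predicted:.4f} V")
```

The measured offset comes from a closed-loop self-test, while `open_loop_offset` only predicts the offset from a temperature reading, so it is useful only when the drift has been characterized beforehand.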

System gain errors can be calibrated by switching a known signal into the equipment at an early stage and measuring the output level. This is done at power-up or periodically during lulls in operation.
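A gain check of this kind reduces to one ratio. In this minimal sketch the function name, the nominal gain of 20, and the measured value are illustrative assumptions, and any offset is assumed to have been removed already.

```python
def gain_correction(known_input, measured_output, nominal_gain):
    """Return the factor that scales measured outputs back to the nominal gain."""
    if known_input == 0 or measured_output == 0:
        raise ValueError("known input and measured output must be nonzero")
    actual_gain = measured_output / known_input
    return nominal_gain / actual_gain

# Hypothetical case: a 0.100 V test signal through a nominal gain-of-20 stage
# produces 2.060 V instead of the expected 2.000 V.
correction = gain_correction(0.100, 2.060, nominal_gain=20.0)
print(f"correction factor {correction:.5f}")
print(f"corrected output {2.060 * correction:.4f} V")
```

The factor can be applied in software or converted to a setting for a calibration DAC or digital pot in the gain path.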

Enabling Accurate Automated Adjustments with Calibration DACs and Pots

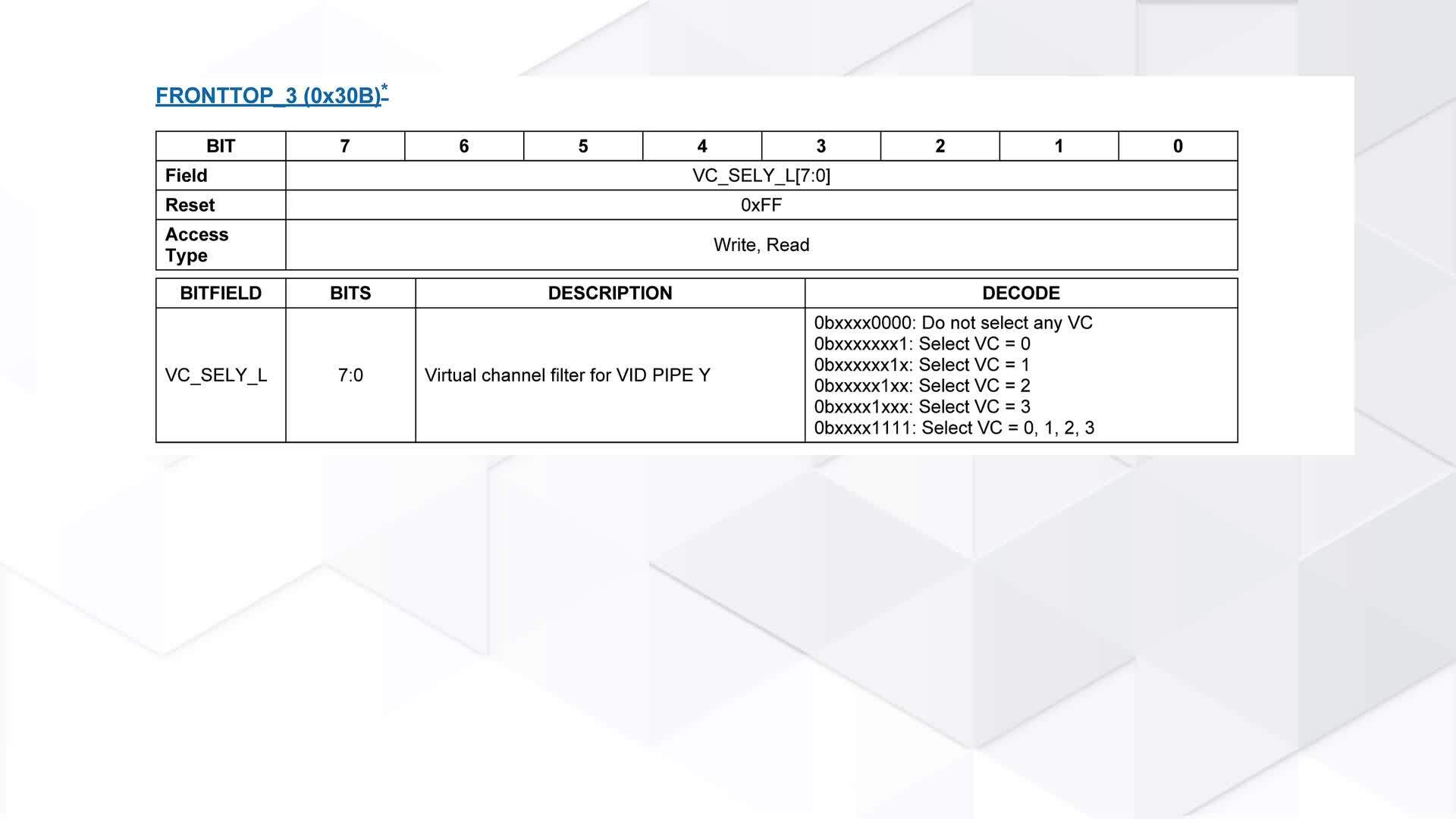

Calibration digital-to-analog converters (CDACs) and calibration digital pots (CDPots) share some unique attributes that enable trimming, adjustment, and calibration. The first advantage is internal nonvolatile memory, which automatically restores the calibration setting during power-up. Figure 1 illustrates a second advantage: the ability to customize the calibration granularity and location for industrial safety.

Figure 1. Comparing the calibration range of an ordinary DAC to a CDAC.

Ordinary DACs accept a single reference voltage (VREF), which usually sets the highest DAC output. The lowest DAC output is a fixed voltage, typically ground. Because the available steps are evenly distributed between ground and VREF, a near-center adjustment leaves much of this range unused. For example, with VREF set to 4V, a 10-bit DAC yields a step size of 4V/1024, or about 0.0039V per step. It is critical in industrial equipment to remove all safety-related errors. Removing the unused adjustment range eliminates any possibility that the circuit could be grossly misadjusted.

The CDAC and CDPot allow both the top and bottom DAC voltage to be set to arbitrary voltages, thus removing excess adjustment range. In Figure 1, a low value of 1V and a high value of 2V are selected as examples. To achieve a 0.0039V step size over the 1V to 2V range, only an 8-bit device is needed, which saves cost. Additionally, this increases safety by removing any possibility that the circuit could be misadjusted. The high and low voltages for the CDAC are arbitrary and, therefore, can be centered wherever the circuit calibration is required. If the tolerance analysis for the circuit indicates that a range of 1.328V to 1.875V is needed for calibration, it can be accommodated. The 256-step device would yield a granularity of 0.00214V. Thus, the granularity of the adjustment can be optimized for the specific application.
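The step-size figures quoted above can be reproduced with a few lines of arithmetic. The sketch follows the article's convention of dividing the span by the number of codes (2 to the power of the bit count). The function names and the 1.6V target are illustrative.

```python
def step_size(v_low, v_high, bits):
    """Adjustment granularity: span divided by the number of codes."""
    return (v_high - v_low) / (2 ** bits)

def code_for_voltage(target, v_low, v_high, bits):
    """Nearest code for a target voltage, clamped to the valid code range."""
    code = round((target - v_low) / step_size(v_low, v_high, bits))
    return max(0, min(2 ** bits - 1, code))

print(f"10-bit, 0 V to 4 V:         {step_size(0.0, 4.0, 10):.5f} V/step")
print(f"8-bit, 1 V to 2 V:          {step_size(1.0, 2.0, 8):.5f} V/step")
print(f"8-bit, 1.328 V to 1.875 V:  {step_size(1.328, 1.875, 8):.5f} V/step")
print(f"code for 1.6 V in the last range: {code_for_voltage(1.6, 1.328, 1.875, 8)}")
```

Because the code is clamped to the 0 to 255 range, no setting can drive the output outside the 1.328V to 1.875V window, which is the safety property described in the text.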

Reducing Cost and Improving Accuracy by Replacing Mechanical Trims with All-Electronic Equivalents

Digitally controlled adjustable devices offer several advantages over mechanical devices in industrial systems. The largest advantage is lower cost. ATE can perform calibration precisely time after time, thereby eliminating the considerable costs associated with error-prone manual adjustments. Also, digital pots allow periodic testing to occur more frequently or over longer equipment lifespans, since they can guarantee 50,000 write cycles. The best mechanical pots can support only a few thousand adjustments.
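To put the endurance figure in perspective, the short calculation below estimates how often a periodic calibration could write to nonvolatile memory over a product's life. The 10-year service life, the single write per calibration, and the 3,000-adjustment figure used for a mechanical pot are assumptions chosen for illustration.

```python
def calibrations_per_day(endurance_cycles, service_years, writes_per_calibration=1):
    """Average calibrations per day that stay within the write-cycle budget."""
    days = service_years * 365
    return endurance_cycles / (writes_per_calibration * days)

digital = calibrations_per_day(50_000, service_years=10)
mechanical = calibrations_per_day(3_000, service_years=10)  # assumed "few thousand"
print(f"digital pot:    about {digital:.1f} calibrations per day")
print(f"mechanical pot: about {mechanical:.1f} adjustments per day")
```

Under these assumptions the digital pot supports more than a dozen stored recalibrations per day, while the mechanical pot supports fewer than one.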

Location flexibility and size are other advantages compared to mechanical pots. Digitally adjustable pots can be mounted on the circuit board directly in the signal path, exactly where they are needed. In contrast, mechanical pots may require human access, which can necessitate long circuit traces or coaxial cables. In sensitive circuits, the capacitance, time delay, or interference pickup of these cables can reduce equipment performance.

Digital pots also maintain their calibration values better over time, whereas mechanical pots can continue to experience small movements even after they are sealed. The wiper will move as the wiper spring relaxes, when the pot is temperature cycled, or when the pot is subjected to shipping vibration. Calibration values stored in digital pots are not affected by these factors.

A one-time programmable (OTP) CDPot can be used for extra safety. It permanently locks in the calibration setting, preventing an operator from making further adjustments. To change the calibration value, one must physically replace the OTP CDPot. A special variant of the OTP CDPot always returns to its stored value upon power-on reset, while allowing operators to make limited adjustments during operation at their discretion.

Leveraging Precision Voltage References for Digital Calibration

Sensor and voltage measurements with precision analog-to-digital converters (ADCs) are only as good as the voltage reference used for comparison. Likewise, output control signals are only as accurate as the reference voltage supplied to the DAC, amplifier, or cable driver.

Common power supplies are not adequate to act as precision voltage references. Typical power supplies are only five to ten percent accurate; they change with load and line changes; and they tend to be noisy.
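The effect of an inaccurate reference can be shown with an idealized ADC model. The sketch assumes a 12-bit converter whose software believes the reference is 5.000V while the supply actually sits 5% high. The function names and all values are illustrative.

```python
def adc_code(v_in, v_ref_actual, bits):
    """Ideal ADC: the code is proportional to v_in / v_ref, truncated and clamped."""
    code = int(v_in / v_ref_actual * 2 ** bits)
    return max(0, min(2 ** bits - 1, code))

def code_to_volts(code, v_ref_assumed, bits):
    """Convert a code back to volts using the reference value the software assumes."""
    return code * v_ref_assumed / 2 ** bits

BITS = 12
true_input = 2.000
code = adc_code(true_input, v_ref_actual=5.25, bits=BITS)   # supply is 5% high
reported = code_to_volts(code, v_ref_assumed=5.000, bits=BITS)
error = (reported - true_input) / true_input
print(f"reported {reported:.4f} V for a {true_input:.3f} V input ({error:+.1%})")
```

The measurement error tracks the reference error almost one for one, so the converter's resolution cannot make up for an inaccurate reference.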

Compact, low-power, low-noise, and low-temperature-coefficient voltage references are affordable and easy to use. In addition, some references have internal temperature sensors to aid in the tracking of environmental variations.

In general, there are three kinds of serial calibration voltage references (CRefs), each of which offers unique advantages for different factory applications. Having a choice of serial voltage references enables the designer to optimize and calibrate the exact circuits in the design.

The first type of reference enables a small trim range, typically three to six percent; this is an advantage for gain trim in industrial imaging systems. For instance, coupling a video DAC with a trimmable CRef allows the overall system gain to be fine-tuned by simply adjusting the CRef voltage.

The second type is an adjustable reference that allows adjustment over a wide range (e.g., 1V to 12V), which is advantageous for field devices that have wide-tolerance sensors and that must operate on unstable power. Portable maintenance devices may need to operate from batteries, automotive power, or emergency power generators.

The third type, called an E2CRef, integrates memory, allowing a single-pin command to copy any voltage between 0.3V and [VIN - 0.3V] and then hold that level indefinitely. E2CRefs benefit test and monitoring instruments that need to establish a baseline or warning-alert threshold.

Figure 2 illustrates the production advantages of using an E2CRef. In this example, a power-supply manufacturer uses an E2CRef to create an affordable power supply that stores the setting established during final production test. The manufacturer builds a generic power supply and places it into a holding inventory. When a customer order is received, the output voltage is adjusted by an automated test system before the order is shipped.

Figure 2. Illustrating the manufacturing benefits of using an E2CRef.

The power-supply manufacturer leverages final-test calibration to provide two real benefits. First, the manufacturer reduces cost by using individual components with relaxed tolerances, as the final-test calibration corrects for cumulative errors. Second, the manufacturer provides faster delivery to the customer by making custom adjustments to a standard product.
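The automated output adjustment at final test can be sketched generically as a search over a trim code. This sketch does not model the E2CRef's single-pin command. It assumes a hypothetical supply whose output rises monotonically with an 8-bit trim code, and `FakeSupply`, `set_code`, and `measure` are invented for illustration.

```python
class FakeSupply:
    """Simulated adjustable supply with gain and offset errors (illustration only)."""

    def __init__(self):
        self.code = 0

    def set_code(self, code):
        self.code = code

    def measure(self):
        # Nominal design: 3.0 V + 10 mV per code; this unit has +3% gain, +20 mV offset.
        return 3.0 + 0.02 + self.code * 0.0103

def trim_to_target(set_code, measure, target, bits=8):
    """Binary-search the code whose output is closest to target (monotonic output)."""
    low, high = 0, 2 ** bits - 1
    while low < high:
        mid = (low + high) // 2
        set_code(mid)
        if measure() < target:
            low = mid + 1
        else:
            high = mid
    # 'low' is the first code at or above target; the code just below may be closer.
    best = low
    set_code(low)
    best_err = abs(measure() - target)
    if low > 0:
        set_code(low - 1)
        if abs(measure() - target) < best_err:
            best = low - 1
    set_code(best)
    return best

supply = FakeSupply()
code = trim_to_target(supply.set_code, supply.measure, target=5.0)
print(f"trim code {code}, output {supply.measure():.4f} V")
```

The search needs at most about ten measurements for an 8-bit device, which is one way an automated test system keeps the adjustment time short.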

"Just-in-time" inventory control is more important today than ever because winning the order may hinge on quick delivery. Winning an order when a competitor fails to deliver can lead to repeat orders. In addition, increasing inventory turns adds profit directly to the bottom line.

Summary

Calibration is a means to an end. Practical devices have manufacturing component tolerances that can be calibrated out during final production test with laboratory-grade external test equipment. Environmental drift due to time, humidity, or temperature requires field calibration. Electronically adjustable calibration parts allow quick field calibration, including power-on self-test and continuous or periodic calibration. Periodic calibration may also include calibration against laboratory equipment with standards traceable to a recognized standards body. Electronic calibration helps us meet our goal: affordable industrial equipment that is also safe and accurate.
