Bridging GMSL and Ethernet for High Performance and RFC-Compliant Multicamera Video Streaming


2026-05-11

Abstract

This article presents how Gigabit Multimedia Serial Link (GMSL™) technology can be seamlessly bridged to the Ethernet domain to create a complete RFC-compliant, high speed, and low latency multicamera video streaming chain. To obtain a high performance, power-efficient, and flexible architecture, the entire high speed video streaming pipeline was implemented in FPGA resources.

Introduction

Industrial automation through autonomous robots [1] and autonomous driving [2] are considered to be among the most important emerging technologies of the 2020s. A key component of both is video transmission, which provides real-time information about the surroundings of the robot or vehicle. Here, Gigabit Multimedia Serial Link (GMSL™) technology serves as a key pillar, delivering a cost-effective high speed video link over a single wire between multiple camera modules and the host processing system on chip (SoC). At the same time, because most devices in autonomous systems are networked, Ethernet has become a pivotal data transmission standard, offering several high speed variants. Video streaming therefore plays a crucial role: it provides a high speed, standardized pipeline that carries the cameras’ pixels from the host SoC to other networked devices in the environment. The most important requirements for video streaming are high speed and low latency, and an FPGA is the best option for meeting them because of its parallel processing and implementation flexibility. This article describes how highly efficient translation of video lines to IP network packets and real-time transport protocol (RTP)-based video distribution can be achieved entirely in FPGA resources. The approach is showcased with a multicamera GMSL video streaming scenario that relies on the high performance, low latency, and cost-efficient ADRD8012-01Z edge compute platform.

GMSL Technology and RTP Specification

ADI’s GMSL technology is a cost-efficient and scalable SERDES solution designed for transferring real-time video data, control information, and power over a single cable. It comes in two architectural variants: one for the highly reliable serial link between camera modules and embedded SoC systems, and one for the link between embedded SoCs and display devices. Widely adopted in the automotive sector, camera-based GMSL technology is currently crucial for autonomous driving, offering efficient high speed video links from multiple zones of the car over as few cables as possible. It is also beneficial in applications across the robotics, industrial, instrumentation, and healthcare markets.

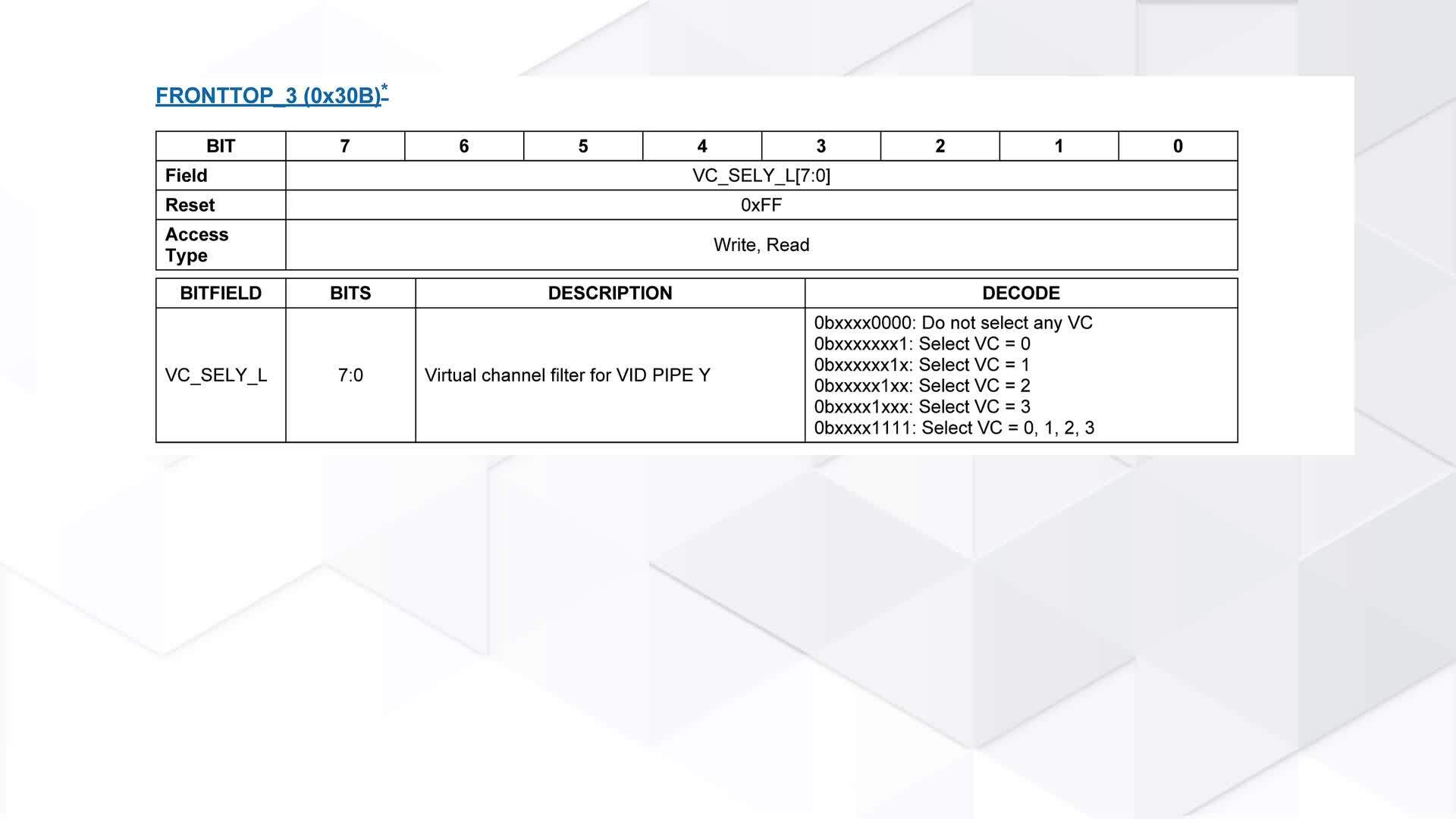

To achieve the previously mentioned single-wire transmission, a high performance SERDES technology was defined, with two main devices: a GMSL serializer and a GMSL deserializer. In the camera context, the serializer converts the high speed video data that arrives from the camera’s camera serial interface version 2 (CSI-2) [3] output interface into a serial stream. The next element in the GMSL transmission chain, the high speed serial link, runs at up to 3 Gbps in GMSL1, up to 6 Gbps in GMSL2, and up to 12 Gbps in GMSL3, over either cable option: coaxial cable or shielded twisted pair (STP). Finally, the deserializer unpacks the data that comes from multiple serializer devices and translates it to one or more CSI-2 output interfaces. To realize this time-divided transmission, the GMSL devices use the virtual channel feature defined in the MIPI CSI-2 specification. The virtual channel identifier is a simple data interleaving mechanism that lets the receiver attribute each received frame to its video source by means of the assigned ID value.
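The virtual channel mechanism can be illustrated with a short software model. This is only a sketch, not the deserializer's or the FPGA receiver's actual logic: it assumes the base CSI-2 packet header layout, in which the Data Identifier byte carries a 2-bit virtual channel in its upper bits and a 6-bit data type in its lower bits (later revisions of the specification may widen the channel field). The function names and the sample payloads are hypothetical.

```python
from collections import defaultdict

YUV422_8BIT = 0x1E  # CSI-2 data type code for YUV422 8-bit


def split_data_identifier(di):
    """Split a CSI-2 Data Identifier byte into (virtual channel, data type)."""
    return (di >> 6) & 0x3, di & 0x3F


def demux_by_virtual_channel(packets):
    """Group interleaved (data_identifier, payload) packets per virtual channel."""
    streams = defaultdict(list)
    for di, payload in packets:
        vc, _data_type = split_data_identifier(di)
        streams[vc].append(payload)
    return dict(streams)


# Hypothetical interleaved stream: one line from each of four cameras.
interleaved = [
    ((vc << 6) | YUV422_8BIT, f"cam{vc}-line0".encode()) for vc in range(4)
]
streams = demux_by_virtual_channel(interleaved)
print(sorted(streams))  # [0, 1, 2, 3]
print(streams[2])       # [b'cam2-line0']
```

Because the channel ID travels with every packet, the receiver needs no knowledge of the interleaving order; it only has to route each packet by its ID.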

Figure 1 depicts a typical connection between four GMSL-enabled cameras and the CSI-2 receiver logic integrated in the FPGA region of a Xilinx SoC, making use of a CSI-2 receiver and an I2C controller to handle both video and control data.

RTP [4] is the most common specification for live video streaming over IP networks. It typically relies on a low latency connectionless transport, the user datagram protocol (UDP) [5], and defines several packetization schemes to cover multiple video payload formats. Figure 2 illustrates a generic RTP packet for uncompressed video [6], consisting of a general RTP header, a payload header, and a number of partial or full video lines. Because RTP is an application layer protocol, it carries the fields needed to describe the payload data; here, the line number, video type, and end-of-frame fields are some of the application-specific fields.
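The packet layout described above can be sketched in a few lines of Python. The field widths follow the fixed RTP header and the uncompressed video payload header as published in the two RFCs; everything else is an assumption made for illustration: the function names, the payload type value 96 (any dynamically negotiated value would do), the SSRC, and the choice of exactly one full line per packet.

```python
import struct

RTP_VERSION = 2
DYNAMIC_PAYLOAD_TYPE = 96  # assumption: a value from the dynamic range 96-127


def build_rtp_header(seq, timestamp, ssrc, marker,
                     payload_type=DYNAMIC_PAYLOAD_TYPE):
    """Pack the 12-byte fixed RTP header (no padding, extension, or CSRC)."""
    byte0 = RTP_VERSION << 6
    byte1 = (int(marker) << 7) | (payload_type & 0x7F)
    return struct.pack("!BBHII", byte0, byte1, seq & 0xFFFF,
                       timestamp & 0xFFFFFFFF, ssrc & 0xFFFFFFFF)


def build_payload_header(ext_seq, length, line_no, offset=0,
                         field=0, continuation=0):
    """Pack the extended sequence number and a single line header (8 bytes)."""
    return struct.pack("!HHHH", ext_seq & 0xFFFF, length & 0xFFFF,
                       ((field & 1) << 15) | (line_no & 0x7FFF),
                       ((continuation & 1) << 15) | (offset & 0x7FFF))


def build_line_packet(line, line_no, seq, timestamp, ssrc, end_of_frame):
    """One full video line per RTP packet; marker set on the frame's last line."""
    return (build_rtp_header(seq, timestamp, ssrc, end_of_frame)
            + build_payload_header(seq >> 16, len(line), line_no)
            + line)


# Hypothetical 1920-pixel YUV422 line (2 bytes per pixel).
packet = build_line_packet(bytes(3840), line_no=0, seq=0, timestamp=0,
                           ssrc=0x1234, end_of_frame=False)
print(len(packet))  # 3860
```

The 20 bytes of RTP overhead per line (12 for the fixed header, 8 for the payload header) are constant, which is part of what makes the format attractive for a fixed-function pipeline.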

Connectionless Network Stacks Using FPGAs vs. CPU Subsystems

As autonomy evolves in the 2020s, there is a huge demand for innovative solutions in several markets such as autonomous driving and industrial automation through robotics. Besides diverse sensor networks and complex AI algorithms, high speed and low latency video streaming is one of the critical parts of autonomous driving and of intelligent robots such as humanoids. Here, the real-time delivery of video data is the most important aspect to consider, which makes connectionless transport a necessity. The previously mentioned RTP is a key pillar of real-time video streaming implementations, mostly used in conjunction with UDP transport over an IP network.

Although connectionless video streaming consumes significantly fewer resources and performs better than connection-oriented designs based on a TCP/IP stack, it can be improved further, because video streaming in automotive and industrial contexts mostly takes place between embedded systems that have a direct hardware (HW) connection to the video sources. This improvement can be obtained with various HW solutions, such as the high performance CPU subsystems used in data centers. While these computing devices can close the performance gaps in typical video streaming pipelines, their cost and integration complexity make them unsuitable for embedded SoC environments, which require components optimized for both energy efficiency and performance. GPU devices offer an alternative for high performance computing, leveraging massively parallel operations, but with a lower degree of HW-level flexibility.

For these reasons, highly reconfigurable FPGAs have become an important component of high performance networking and real-time video streaming. To maximize edge computing performance, current embedded SoCs combine CPU subsystems, FPGA resources, and RAM on a single chip. Such devices are therefore the best computing option for real-time video streaming: they can receive the video data, translate the received video pixels into network packets, and stream the video with minimal CPU involvement, which is limited to configuring protocol fields. As a result, the complete translation of video data to network packets and the connectionless video streaming can deliver the highest possible performance in both latency and data rate, whether one or multiple camera modules are used. To obtain the best transmission performance and fit into the available FPGA resources, this network stack architecture features a full Request for Comments (RFC)-compliant application layer protocol and a minimal software (SW)-controllable design for the transport, network, and data link layers. Consequently, a traditional full-fledged IP routing implementation is replaced by manual, simplified field configuration for IP address assignment.
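As a rough software model of what "manual and simplified field configuration" can mean, the sketch below fills in an IPv4 and a UDP header from a handful of statically chosen values, with no routing or address resolution involved. It is not the platform's register interface: the function names, addresses, and port numbers are hypothetical, and leaving the UDP checksum at zero (permitted over IPv4) is an assumption made to keep the example short.

```python
import socket
import struct

IPPROTO_UDP = 17


def ipv4_checksum(header):
    """One's complement sum of 16-bit words, as used by the IPv4 header."""
    total = 0
    for i in range(0, len(header), 2):
        total += (header[i] << 8) | header[i + 1]
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_ipv4_udp_headers(src_ip, dst_ip, src_port, dst_port,
                           payload_len, ttl=64):
    """Build a 20-byte IPv4 header followed by an 8-byte UDP header."""
    udp_len = 8 + payload_len
    total_len = 20 + udp_len
    # Version 4, IHL 5, DSCP 0, ID 0, Don't Fragment set, checksum placeholder 0.
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, total_len, 0, 0x4000, ttl,
                     IPPROTO_UDP, 0,
                     socket.inet_aton(src_ip), socket.inet_aton(dst_ip))
    ip = ip[:10] + struct.pack("!H", ipv4_checksum(ip)) + ip[12:]
    # UDP checksum left at 0 (not computed), which IPv4 allows.
    udp = struct.pack("!HHHH", src_port, dst_port, udp_len, 0)
    return ip + udp


# Hypothetical static configuration for one camera stream.
headers = build_ipv4_udp_headers("192.168.1.10", "192.168.1.20",
                                 5004, 5004, payload_len=3860)
print(len(headers))  # 28
```

Apart from the total length and the checksum, every field here is constant for a given stream, so software can write the values once and the hardware only has to update the length-dependent fields.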

Video to Network Domain Translation

As previously mentioned, GMSL technology serializes the video data that comes from multiple camera modules and then outputs the concatenated streams to an embedded SoC over a standardized interface such as CSI-2. To realize the concatenation, it leverages the virtual channel interleaving mechanism, using the storage available in the GMSL SERDES devices. This article describes a high performance, low latency translation of uncompressed video lines to IP network packets. The standardized RTP-based video streaming stack was chosen for its minimal header design and its connectionless transport layer option using UDP. With this approach, the video streaming stack is compatible with the many SW or HW receiver implementations that fully comply with the RTP RFCs.
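To make the compatibility point concrete, the following sketch shows what a software receiver has to do to recover one video line from such a packet. It is a minimal illustration under stated assumptions: exactly one line header per packet, no padding, header extension, or CSRC entries, and hypothetical names throughout. A production receiver would also handle reordering and loss.

```python
import struct

RTP_HEADER_LEN = 12
LINE_HEADER_LEN = 8  # extended sequence number plus one line header


def parse_line_packet(packet):
    """Decode an RTP packet that carries one uncompressed video line."""
    if len(packet) < RTP_HEADER_LEN + LINE_HEADER_LEN:
        raise ValueError("packet too short")
    byte0, byte1, seq, timestamp, ssrc = struct.unpack_from("!BBHII", packet, 0)
    if byte0 >> 6 != 2:
        raise ValueError("not an RTP version 2 packet")
    if byte0 & 0x3F:
        raise ValueError("padding, extension, and CSRC are not handled here")
    ext_seq, length, field_line, cont_offset = struct.unpack_from(
        "!HHHH", packet, RTP_HEADER_LEN)
    if cont_offset & 0x8000:
        raise ValueError("more than one line header is not handled here")
    start = RTP_HEADER_LEN + LINE_HEADER_LEN
    pixels = packet[start:start + length]
    if len(pixels) != length:
        raise ValueError("payload shorter than the declared line length")
    return {
        "sequence": (ext_seq << 16) | seq,
        "timestamp": timestamp,
        "ssrc": ssrc,
        "payload_type": byte1 & 0x7F,
        "end_of_frame": bool(byte1 & 0x80),
        "line_no": field_line & 0x7FFF,
        "offset": cont_offset & 0x7FFF,
        "pixels": pixels,
    }


# Hand-built sample: last line (1279) of a frame, four bytes of YUV422 data.
sample = struct.pack("!BBHIIHHHH", 0x80, 0x80 | 96, 7, 90000, 0xCAFE,
                     0, 4, 1279, 0) + b"\x10\x80\x10\x80"
info = parse_line_packet(sample)
print(info["line_no"], info["end_of_frame"])  # 1279 True
```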

Although the RTP specification for uncompressed video allows partial lines, the best approach for an HW-powered implementation is a design without fragmentation, which significantly lowers resource usage and minimizes power consumption.

As described earlier, there are several compute machines that can do video to network domain translation. Moreover, there are multiple different data transmission approaches. Figures 3, 4, and 5 present possible memory-based data transmissions using CPU/GPU/FPGA-based embedded systems and this article’s FPGA-only video streaming stack architecture.

Figure 3 illustrates the options available when implementing a video streaming networking stack based on a classical HW/SW codesign. The left side contains the video receiver subsystem, which sends the video lines to the RAM of the embedded system through a direct memory access (DMA) controller. The video data is then read from memory and transmitted to the Ethernet media access controller (MAC) by one of the following network stack mechanisms: (1) the Data Plane Development Kit (DPDK) [7] or (2) a traditional implementation relying on Linux kernel networking [8]. Finally, the Ethernet MAC forwards the network packets to the attached Ethernet PHY.

Figure 4 describes how a remote direct memory access (RDMA)-based transmission chain can be implemented, showing that both the sender and receiver sides require an RDMA-enabled network interface card (NIC). As listed in the diagram, the underlying layer 2 implementation is based on the Ethernet standard. This example chain gives an overview of how an RDMA over converged Ethernet (RoCE) [9] transmission can be made.

Figure 5 presents an overview of this article’s approach, exposing the most important components: the FPGA-based video lines to RTP packets conversion and the connectionless network stack modules.

Figure 6 describes the translation of CSI-2 video data to a complete RTP packet. To design the conversion efficiently, a correspondence of one line to one RTP packet was chosen, representing the best solution from both resource usage and Ethernet standard perspectives. A typical Ethernet frame is bounded by the specified maximum transmission unit (MTU). Production NICs use an MTU of 1500 bytes (normal frames) or 9000 bytes (jumbo frames), with a theoretical maximum of 65536 bytes (super jumbo frames) for experimental use cases. Because a video line amounts to thousands of bytes, the one-line architecture was selected to comply with most receivers’ NIC designs. As seen before, an SW-only or HW/SW codesigned translation of video data to RTP packets goes through multiple memory read/write operations in both the video and networking SW subsystems, adding complexity and degrading performance through high latency. In this full HW approach, the implementation’s complexity is minimized: a single storage mechanism holds each line for the duration of its transfer, ensuring a nonfragmented packet that is then sent over the wire. On the other hand, this extremely simplified mechanism is valid only for translating data from one group of time-divided video streams that use the virtual channel capability. When multiple parallel groups of time-divided video streams are used, a new storage level needs to be added to the transmission hierarchy, creating a time-divided transmission that feeds the networking subsystem.
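The relationship between line size and MTU comes down to simple arithmetic, sketched below. The line widths of 1920 and 2880 pixels and the 2 bytes per pixel of YUV422 are illustrative assumptions, not figures stated for any particular camera; the header sizes are the standard minimum IPv4, UDP, and RTP sizes plus the 8-byte payload header for a single line.

```python
MTU_STANDARD = 1500
MTU_JUMBO = 9000

IPV4_HEADER = 20
UDP_HEADER = 8
RTP_HEADER = 12
LINE_PAYLOAD_HEADER = 8  # extended sequence number plus one line header


def ip_packet_size(width_px, bytes_per_px=2):
    """Size of the IP packet that carries one full video line."""
    headers = IPV4_HEADER + UDP_HEADER + RTP_HEADER + LINE_PAYLOAD_HEADER
    return width_px * bytes_per_px + headers


def fits_unfragmented(width_px, mtu, bytes_per_px=2):
    """True when a whole line fits in one IP packet at the given MTU."""
    return ip_packet_size(width_px, bytes_per_px) <= mtu


for width in (1920, 2880):  # hypothetical line widths
    print(width, ip_packet_size(width),
          fits_unfragmented(width, MTU_STANDARD),
          fits_unfragmented(width, MTU_JUMBO))
# 1920 3888 False True
# 2880 5808 False True
```

Under these assumptions a full line exceeds the standard MTU but fits comfortably in a jumbo frame, so the one-line-per-packet design presupposes that the receiving NIC and any switches in between accept jumbo frames.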

As shown in Figure 6, the translation flow contains two steps: (1) data acquisition from the CSI-2 receiver implementation and creation of an AXIS-compliant packet that holds one video line, and (2) creation of the RTP packet by adding the protocols’ headers to the video line.
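The two steps can be modeled in software as a pair of small generators. This is a behavioral sketch only, not the FPGA design: it assumes that each stream beat is a pair of data bytes and a TLAST flag, that TLAST closes one video line, and that the header builder is supplied by the caller. All names, including the toy header in the usage lines, are hypothetical.

```python
from typing import Callable, Iterable, Iterator, Tuple

Beat = Tuple[bytes, bool]  # (TDATA bytes, TLAST flag)


def collect_lines(beats: Iterable[Beat]) -> Iterator[bytes]:
    """Step 1: accumulate beats in a single line buffer until TLAST."""
    buffer = bytearray()
    for tdata, tlast in beats:
        buffer += tdata
        if tlast:
            yield bytes(buffer)
            buffer.clear()


def packetize(lines: Iterable[bytes], lines_per_frame: int,
              make_headers: Callable[[int, int, bool], bytes]) -> Iterator[bytes]:
    """Step 2: put the protocol headers in front of each complete line."""
    for index, line in enumerate(lines):
        line_no = index % lines_per_frame
        end_of_frame = line_no == lines_per_frame - 1
        yield make_headers(line_no, len(line), end_of_frame) + line


def toy_headers(line_no: int, length: int, end_of_frame: bool) -> bytes:
    """Stand-in for the real header stack: line number, length, marker."""
    return bytes([line_no, length, int(end_of_frame)])


# Two lines of a hypothetical 2-line frame, each delivered in two beats.
beats = [(b"ab", False), (b"cd", True), (b"ef", False), (b"gh", True)]
packets = list(packetize(collect_lines(beats), 2, toy_headers))
print(packets)  # [b'\x00\x04\x00abcd', b'\x01\x04\x01efgh']
```

Only one line is ever buffered at a time, which mirrors the single storage mechanism described for the full HW approach.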

ADI GMSL to 10 GbE Edge Compute Platform

To benefit from the advantages that a full FPGA-accelerated RTP video streaming design can offer, ADI provides the ADRD8012-01Z edge compute platform. It is a high performance multicamera GMSL to 10 Gigabit Ethernet (GbE) converter built around the cost-effective AMD-Xilinx K26 SoM. The HW setup features two quad MAX96724 GMSL deserializers that can accommodate parallel video streaming from up to eight cameras, indicated as two blocks of four virtual channels (VCs, VC0 to VC3) before the deserializer. Figure 7 presents the full video streaming chain. On the networking side, the platform provides a small form-factor pluggable plus (SFP+) cage that can be used for 10 GbE-based network transmission.

The camera modules that were tested with this product’s video streaming implementations are the automotive-grade Tier IV C1 and C2, featuring a high dynamic range of 120 dB and 2.5 MP/5.4 MP resolutions, and the Intel RealSense D457. The modules also contain an integrated image signal processor (ISP) attached to the corresponding image sensor, and can therefore transmit full color images, for example in the YUV422 format.

As previously mentioned, in the context of video streaming, the application layer specification is the foundation for exposing the frames’ structure. For uncompressed video transmission, the RTP payload header [6] defines the length of each payload field, indicating the actual line length, the line number, and other fragmentation-related elements that are not considered in this article’s approach. The general RTP header [4], in turn, carries the video type and the marker identifier, which flags the end of a video frame and therefore maps directly onto the TVALID/TLAST logic of the Arm® AXI4-Stream (AXIS) interface.
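A quick link budget shows how an eight-camera load relates to a 10 GbE link. The numbers below are purely illustrative assumptions (1920 × 1280 frames at 30 frames per second, YUV422 at 2 bytes per pixel, one line per packet) and are not measurements of the platform. The per-packet overhead counts the IPv4, UDP, and RTP headers, the 8-byte payload header, and the usual Ethernet framing (MAC header, FCS, preamble, and interframe gap).

```python
PROTOCOL_HEADERS = 20 + 8 + 12 + 8    # IPv4, UDP, RTP, line payload header
ETHERNET_OVERHEAD = 14 + 4 + 8 + 12   # MAC header, FCS, preamble/SFD, gap
LINK_RATE_BPS = 10_000_000_000        # 10 GbE line rate


def wire_rate_bps(width_px, height_px, fps, cameras, bytes_per_px=2):
    """Bits per second on the wire with one video line per Ethernet frame."""
    bytes_per_line = (width_px * bytes_per_px
                      + PROTOCOL_HEADERS + ETHERNET_OVERHEAD)
    return bytes_per_line * 8 * height_px * fps * cameras


# Hypothetical load: eight cameras at 1920 x 1280, 30 frames per second.
rate = wire_rate_bps(1920, 1280, 30, cameras=8)
print(f"{rate / 1e9:.2f} Gbps")   # 9.65 Gbps
print(rate <= LINK_RATE_BPS)      # True
```

With these assumed parameters the headers and framing add only about 2% to the raw pixel rate, which is why the one-line-per-packet mapping costs little bandwidth even when the link is nearly full.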

Conclusion

This article illustrated how a high speed GMSL-powered local video transmission can be efficiently extended to a high performance video streaming infrastructure while maintaining the same data rate and offering low latency. To accomplish this robust and efficient architecture, an FPGA SoC device was chosen. Offering both CPU and FPGA resources, the selected device combines software and hardware capabilities to create a superior multicamera real-time video streaming engine.

References

[1] “Jobs of Tomorrow: Technology and the Future of the World’s Largest Workforces.” World Economic Forum, October 2025.

[2] “Autonomous Vehicles: Timeline and Roadmap Ahead.” World Economic Forum, April 2025.

[3] MIPI CSI-2 Specification. MIPI Alliance.

[4] Henning Schulzrinne, Stephen Casner, Ron Frederick, and Van Jacobson. “RTP: A Transport Protocol for Real Time Applications.” The Internet Society, July 2003.

[5] Jon Postel. “User Datagram Protocol.” Information Sciences Institute, August 1980.

[6] Ladan Gharai and Charles Perkins. “RTP Payload Format for Uncompressed Video.” The Internet Society, September 2005.

[7] DPDK Landing Page. DPDK.

[8] “Linux Kernel Networking Documentation.” The Kernel Development Community.

[9] “RDMA over Converged Ethernet.” Wikipedia.org.

About the Author

Alin-Tudor Sferle is a senior FPGA design engineer at Analog Devices in the Software and Security Group. He joined ADI in February 2021 and currently works on HW/SW codesign for FPGA-based solutions in the vision and netwo...